JavaScript Procedural Sound Experiments: Rain & Wind [ProcessingJS + WebPd]

When working with sound on the web, it is common to use sound samples for events in games or rich content websites, but it is rarely possible to do procedural sound synthesis for different situations. In games it is often easier to use artificial, procedural sounds for rain, breaking glass, wind, sea etc. because their parameters can be changed on the fly. I was really excited when I discovered a small project called WebPd, which aims to port the Pure Data sound engine to pure JavaScript and make it possible to use it directly on websites without plugins. The project was initiated by Chris McCormick and is based on the Mozilla Audio Data API, which is under intense development by the Mozilla Dev team: at the moment it works in Firefox 4+.

Also, being a small project, it has only a few very basic Pd objects implemented at the moment. For basic sound synthesis you can use a few sound generator objects (such as osc~, phasor~, noise~), but there are no real timing objects (delay, timer, etc.), so all event handling must be solved on the JavaScript side and only the triggered synthesis commands can be left to the Pd side. If you are familiar with developing projects with libPd for Android, or ofxPd for OpenFrameworks, you may know the situation.

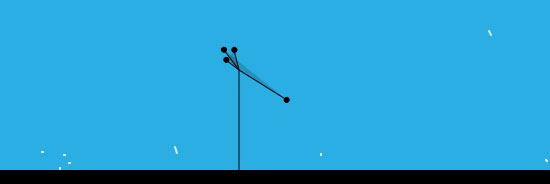

So here I made a small test composition of a landscape that has some procedural audio elements: rain and wind. The rain is made with randomly pitched oscillators shaped by very short ADSR events (in fact only attack/release events, which are triggered from the JavaScript code, with the actual values passed directly to a line~ object in Pd). The density of the rain changes with some random factor, giving a more organic vibe to the clean artificial sounds. The wind is made with a noise object that is filtered continuously with a high-pass filter object. The amount of filtering depends on the size (area) of the triangle that is visible in the landscape as an “air sleeve”.
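The parameter logic described above can be sketched in plain JavaScript, independent of WebPd. This is only an illustration: all function names, the frequency and timing ranges, and the direction of the area-to-cutoff mapping are my assumptions here, not values taken from the demo, and the resulting numbers would still have to be sent to the Pd patch by whatever messaging the WebPd build provides.

```javascript
// Hypothetical helpers: none of these names come from WebPd or ProcessingJS.

// Area of a triangle from its three corner points (shoelace formula).
function triangleArea(ax, ay, bx, by, cx, cy) {
  return Math.abs(ax * (by - cy) + bx * (cy - ay) + cx * (ay - by)) / 2;
}

// Map an area linearly onto a high-pass cutoff range in Hz, clamped to
// [minHz, maxHz]. Assumption: a larger triangle means a higher cutoff.
function areaToCutoff(area, maxArea, minHz, maxHz) {
  var t = Math.min(Math.max(area / maxArea, 0), 1);
  return minHz + t * (maxHz - minHz);
}

// Parameters for one raindrop: a random pitch and a short attack/release.
// The ranges are assumed values. `random` can be injected for testing.
function makeRaindrop(random) {
  random = random || Math.random;
  return {
    frequency: 800 + random() * 3200, // Hz
    attackMs: 2,
    releaseMs: 20 + random() * 40
  };
}

// Let the rain density (probability of a drop per frame) drift randomly,
// kept between 0.05 and 0.95 so the rain never stops or saturates.
function nextDensity(density, random) {
  random = random || Math.random;
  var drift = (random() - 0.5) * 0.1;
  return Math.min(Math.max(density + drift, 0.05), 0.95);
}
```

In a draw() loop one could call nextDensity() once per frame, trigger a makeRaindrop() whenever Math.random() falls below the current density, and recompute areaToCutoff(triangleArea(...), ...) for the wind.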

If you would like to create parallel, polyphonic sounds, it might be a good idea to instantiate several Pd objects for different sound events. When I declared only a single Pd instance, not all the messages got through to the Pd patch: messages that I sent in every frame (for continuous noise filtering of the ‘wind’) ‘clashed’ with the discrete, separate messages that were sent when the trigger() function was called for the raindrops. So I ended up using two Pd engines at the same time in setup():

pd_rain = new Pd(44100, 200);

pd_rain.load("rain.pd", pd_rain.play);

pd_wind = new Pd(44100, 200);

pd_wind.load("wind.pd", pd_wind.play);

This way, accurate values are sent to each of the Pd engines. Try out the demo here (you need Firefox 4+).

For user input and visual display I am using the excellent ProcessingJS library, which makes it possible to draw on and interact with the HTML5 canvas tag directly using Processing syntax. The response time (latency) is quite good and the sound rarely drops out, which is really impressive if you have some experience with web-based sound wave generation.

Permutation

Permutation is a sound instrument developed for rotating, layering and modifying sound samples. Its structure was originally developed for a small MIDI controller that has a few knobs and buttons. The controller is mounted on a broken piano, along with several contact microphones. The basic idea of using a dead piano as an untempered, raw sound source reflects the central position of this instrument in western music during the past few hundred years or so. Contact mikes and prepared pianos, as we know, represent a constant dialogue between classical music theory and other, more recent free forms of artistic and philosophical representation. The aim of Permutation, however, is a bit different from this phenomenon. It is a temporal extension of gestures made on the whole resonating body of a uniquely designed sound architecture (the grand piano). In fact it is so well designed that it used to serve composers as a simulation tool for mimicking a whole group of musicians. A broken, old wooden structure has so many sound deformations of its own that it becomes an intimate space for creating strange and sudden sounds that can be rotated and folded back onto themselves.

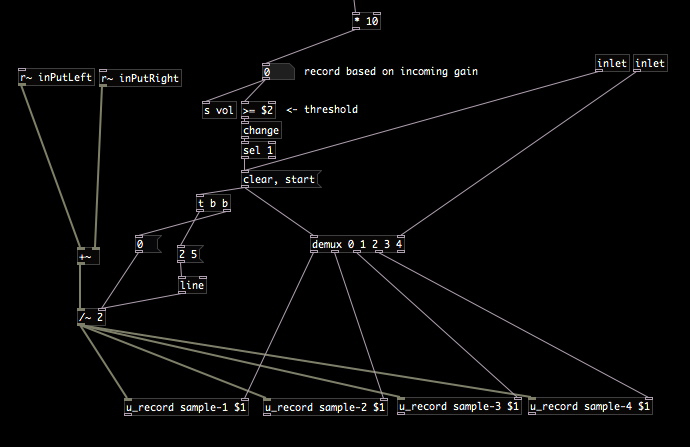

The system is built for live performances and has been used for making music for the Artus independent theatre company. The Pure Data patch can be downloaded from here. Please note that it was developed rapidly for use with a MIDI controller, so the patch may be confusing. The patch contains some code from the excellent rjdj library on GitHub.

Aether

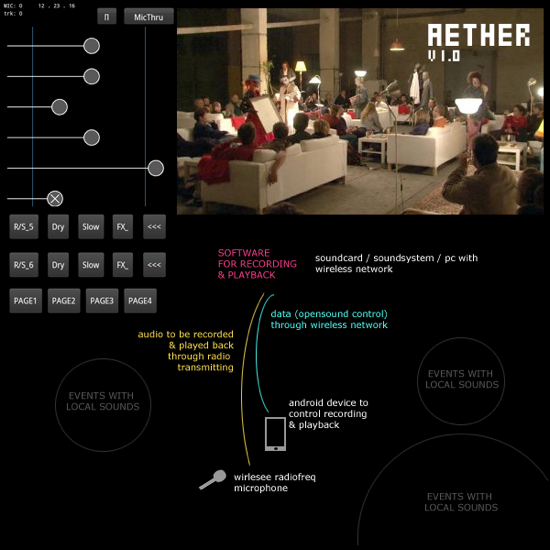

Aether is a wireless remote application developed for collecting spatially distributed sound memories. Its aim is to collect and use sound events on the fly. The client interface is shown on the left of the image, the performance environment we are using it in can be seen on the right, and a diagram of the setup is at the bottom.

While walking around a larger space (one that can be covered by an ordinary wireless network), one can send and receive realtime data between the client app and the sound system. All the logic, synthesis and processing happens on the server (a laptop located somewhere in the space), while control, interaction and site-specific probability happen on the portable, site-dependent device. The system was used for several performances of the Artus independent theatre company where action and movement had to be recorded and played back immediately.

The client app runs on an Android device that is connected to a computer running Pure Data over a local wireless network (using the OSC protocol). The space for interaction and control can be extended to the limits of the wireless network coverage and the maximum distance between the radio frequency microphone and its receiver. The system has been tested and used in a factory building over an area of up to 250-300 square meters.
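To give an idea of what travels over the network, here is a minimal sketch of how a single OSC message carrying one float argument is laid out in bytes, following the OSC 1.0 format (null-terminated strings padded to four bytes, big-endian 32-bit floats). It is written in JavaScript only to match the rest of this post; it is not the actual client code, and the function names and the "/rec" address used in the usage note are hypothetical.

```javascript
// Encode an ASCII string as an OSC string: the characters, at least one
// terminating zero byte, then zero padding up to a multiple of 4 bytes.
function oscString(text) {
  var bytes = [];
  for (var i = 0; i < text.length; i++) {
    bytes.push(text.charCodeAt(i) & 0x7f); // OSC strings are ASCII
  }
  bytes.push(0);
  while (bytes.length % 4 !== 0) {
    bytes.push(0);
  }
  return bytes;
}

// Build a complete OSC message with one float argument:
// address pattern, type tag string ",f", then the float in big-endian order.
function oscFloatMessage(address, value) {
  var head = oscString(address).concat(oscString(",f"));
  var packet = new Uint8Array(head.length + 4);
  packet.set(head, 0);
  new DataView(packet.buffer).setFloat32(head.length, value, false);
  return packet;
}
```

For example, oscFloatMessage("/rec", 1.0) yields a 16-byte packet: 8 bytes for the padded address, 4 for the type tag and 4 for the float. Such a packet is typically sent as a single UDP datagram to the machine running Pure Data.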

The client code is based on an excellent collection of Processing/Android examples by ~popcode that is available to grab from Gitorious.

The source is available from here (note: the server Pd patch is not included because of its ad-hoc spaghettiness).

transform@lab

As a collaboration of several universities across Europe, including Gobelins (Paris, F), University of Wales (Newport, UK) and Moholy-Nagy University of Design & Arts (Budapest, H), Transformatlab aims to be a series of workshops on cross-media projects covering design, emerging technologies, nonlinear narratives and gaming in the culture of our networked society. A diverse set of interaction systems was presented to the workshop participants. We introduced the physical sensor capabilities of Android tablet devices and computer vision using Kinect. This example is one of the many projects that were developed during the workshop. The prototype was developed from scratch within two days using the OpenKinect library wrapper for Java.

No Copy Paste

Live coding practice is an emerging field in contemporary digital performance culture. We have made several performances both inside and outside of the field with the no copy paste collective.

Several performances can be observed at the no copy paste website.